Understanding AI unit economics: The missing piece to monetizing AI

Managing AI cost is no longer an optimization problem; it’s a survival issue.

For AI-native companies, burn rate scales with how much intelligence the product delivers. For SaaS companies adding AI, each new AI feature quietly reshapes a cost structure that once supported 80% gross margins. The first path burns through venture capital quickly; the second erodes the margin profile that made the business attractive in the first place. The failure mode is the same in both cases: shipping AI features without designing their economics. In agentic systems, cost doesn’t creep; it compounds.

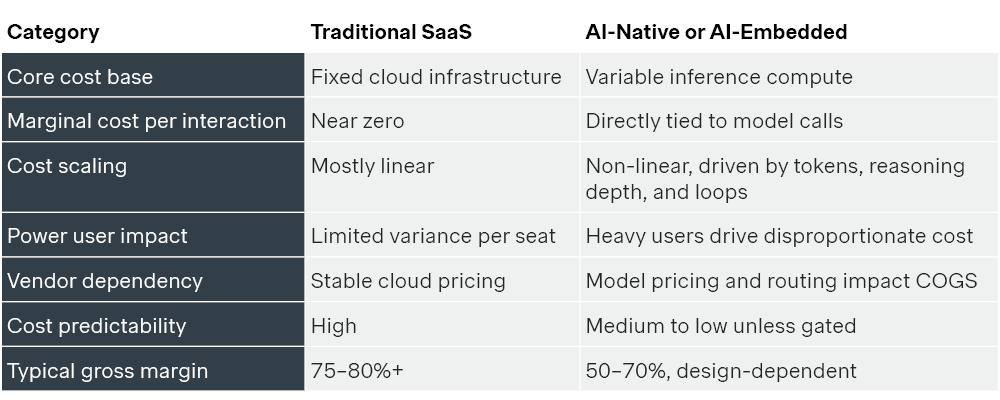

SaaS margins were structural, but AI margins are behavioral

For the past 20 years, SaaS pricing has been anchored in two primary forces: economic value created and competitive alternatives in the market.

If a product delivered clear ROI and was competitively priced, pricing decisions focused on how to segment customers and design packages to capture that value. Cost structure mattered but rarely dictated monetization architecture. Marginal costs were low and predictable, and they improved with scale.

AI agents follow a different logic: they introduce a third force, cost to serve.

Every inference, reasoning step, tool call, and retry consumes compute. As systems become more autonomous, customer behavior becomes tightly coupled to cost. Thus, the pricing equation fundamentally shifts in the age of AI.

The new pricing equation in the agentic era

The primary question is still “What is this worth to the customer?” But it can no longer stand alone. It must also include, “What does it cost to deliver intelligence reliably at scale?”

In agentic systems, heavy usage materially increases cost. A single open-ended workflow, an uncapped feature, or a poorly designed tier can quickly erode gross margins. Value still matters and it remains the anchor. But it must now be understood in the context of competitive alternatives and balanced against cost realities.

Modern AI pricing should balance three forces:

- Economic value created

- Competitive alternatives

- Cost to serve

When these forces align, growth and margin reinforce each other. When one is ignored, the model eventually breaks. Margin is no longer owned by a single function. It’s a cross-functional design challenge spanning product, engineering, pricing, and commercial strategy. To manage this change, leaders should understand what actually drives cost in AI.

Understanding the cost structure of AI

In traditional SaaS, cost to serve was predictable and improved with scale. In AI products, cost behaves differently: it grows with usage, increases with more complex workflows, and rises as systems take more steps on behalf of the user.

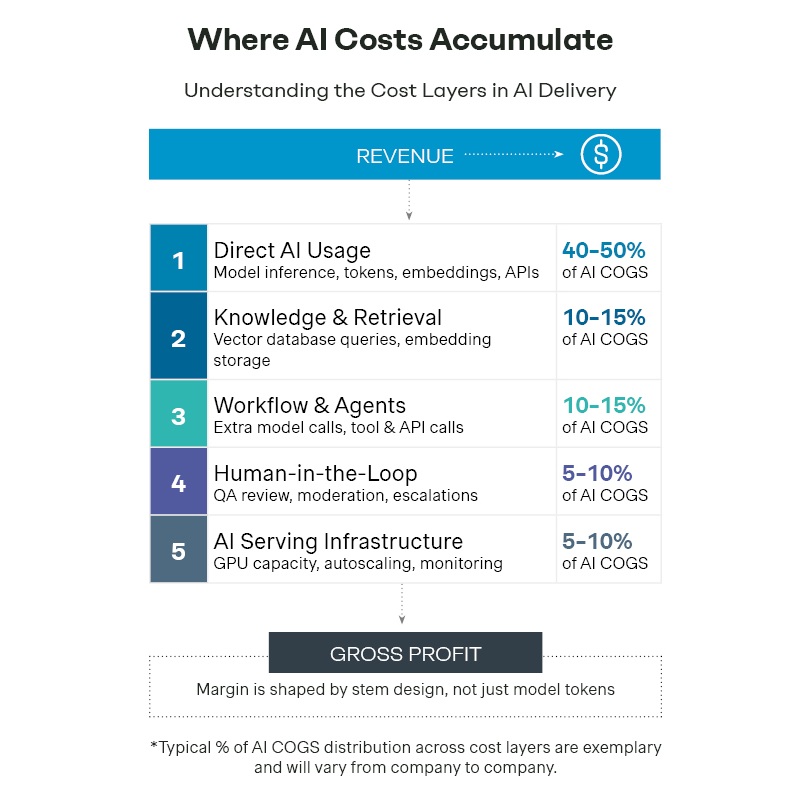

AI cost doesn’t sit in a single line item. While many teams focus on model tokens, cost actually builds across multiple layers of the delivery stack.

Direct AI usage (model layer)

The cost of calling the model includes inference, tokens, embeddings, and other usage-based API charges from LLM providers such as OpenAI and Anthropic. These are typically the largest and most visible cost drivers.

Knowledge and retrieval (knowledge layer)

AI systems need context before generating a response, so that the model can draw on correct information. They must create embeddings, store them in vector databases, and retrieve relevant information at runtime. As products become more personalized, retrieval becomes a recurring part of COGS.

Workflow and agents (workflow layer)

Modern AI systems increasingly operate as agents that execute multi-step tasks. Each step can trigger additional model calls, tool usage, API requests, or retry loops. As workflows become more complex, costs compound quickly. The key dynamic is multiplicative: more reasoning steps directly increase cost exposure.

Human-in-the-loop (human layer)

Many production systems still require human oversight to ensure reliability. This includes QA review, moderation, exception handling, and escalations.

AI serving infrastructure (infrastructure layer)

This layer is the foundation required to run AI systems at scale. It includes GPU and CPU compute, storage, autoscaling, latency optimization, monitoring, observability, and safety systems. These costs become significant as usage grows.
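
To make the layering concrete, here is a minimal sketch of a per-request cost-to-serve calculation. The names `RequestCost` and `gross_margin`, and every dollar figure, are illustrative assumptions, not data from a real product. The point is only that a margin computed from model cost alone can look far healthier than one computed across all five layers.

```python
from dataclasses import dataclass


@dataclass
class RequestCost:
    """Hypothetical per-request cost in USD, split across the five layers."""
    model: float           # inference, tokens, and other provider API charges
    knowledge: float       # embeddings, vector storage, retrieval
    workflow: float        # extra agent steps, tool calls, retries
    human: float           # amortized QA review, moderation, escalations
    infrastructure: float  # compute, storage, monitoring, safety systems

    def total(self) -> float:
        return (self.model + self.knowledge + self.workflow
                + self.human + self.infrastructure)


def gross_margin(revenue: float, cost: float) -> float:
    """Gross margin as a fraction of revenue."""
    return (revenue - cost) / revenue


# Illustrative figures only (assumed, not measured).
revenue_per_request = 0.10
cost = RequestCost(model=0.020, knowledge=0.004, workflow=0.010,
                   human=0.005, infrastructure=0.006)

model_only_margin = gross_margin(revenue_per_request, cost.model)
full_stack_margin = gross_margin(revenue_per_request, cost.total())

print(f"margin counting model cost only: {model_only_margin:.0%}")
print(f"margin counting all five layers: {full_stack_margin:.0%}")
```

With these assumed numbers, the gap between the two margins comes entirely from the four layers that token-level reporting does not show.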

The five structural margin levers in AI

AI margin is not managed in one place; it is designed across functions. In traditional SaaS, cost discipline largely sat with finance, whereas AI businesses shape margin through product architecture, engineering decisions, pricing design, and commercial structure.

Five key structural levers determine whether intelligence scales profitably or expensively.

Model strategy and intelligent routing

Engineering-led. Product-informed. Finance-aware.

Model selection is the largest technical driver of cost to serve. Defaulting every workflow to the most powerful model is economically unsustainable.

High-performing teams match model cost to task complexity:

- Lightweight models for repetitive tasks

- Mid-tier models for nuanced reasoning

- Premium models only where quality affects customer value

This is about cost-value alignment, not cost reduction. It requires cross-functional alignment:

- Product defines acceptable output quality

- Engineering implements routing logic

- Finance models cost exposure
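
The division of labor above can be sketched as a small routing function. This is a simplified illustration under stated assumptions: the tier names, the task categories, and the per-token prices in `PRICE_PER_1K_TOKENS` are hypothetical and do not reflect any provider's actual pricing.

```python
from enum import Enum


class Tier(Enum):
    LIGHT = "light"
    MID = "mid"
    PREMIUM = "premium"


# Hypothetical blended prices in USD per 1,000 tokens (assumed for illustration).
PRICE_PER_1K_TOKENS = {Tier.LIGHT: 0.0005, Tier.MID: 0.003, Tier.PREMIUM: 0.015}

# Product decides which task types are repetitive enough for a lightweight model.
REPETITIVE_TASKS = {"classification", "extraction", "formatting"}


def route(task_type: str, quality_critical: bool) -> Tier:
    """Engineering's routing logic: match model cost to task complexity."""
    if quality_critical:
        return Tier.PREMIUM      # quality directly affects customer value
    if task_type in REPETITIVE_TASKS:
        return Tier.LIGHT        # repetitive, low-ambiguity work
    return Tier.MID              # nuanced reasoning by default


def estimated_cost(tier: Tier, tokens: int) -> float:
    """Finance's exposure model: expected spend for a request of a given size."""
    return PRICE_PER_1K_TOKENS[tier] * tokens / 1000
```

For example, `route("classification", quality_critical=False)` selects the lightweight tier, while the same task flagged as quality-critical goes to the premium tier. Real routers often use a classifier or confidence score instead of a fixed task list; the fixed list is used here only to keep the sketch short.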

Workflow depth and execution control

Product- and engineering-owned. Finance-modeled.

In AI systems, cost compounds with execution depth. A single user request can trigger:

- Multiple reasoning loops

- Tool calls

- Retries and fallbacks

Autonomy is valuable, but without boundaries it makes cost volatile. High-performing companies put guardrails in place, such as loop limits and retry ceilings, to contain that volatility. These are product decisions with direct financial impact. If one power user can distort margins, the system is fragile.
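
One way to express such guardrails in code is a budget object that the agent loop checks on every step. The sketch below is an assumption-laden illustration: `Budget`, `BudgetExceeded`, `run_agent`, and the `step` callable (which returns whether the step succeeded, whether the task is done, and what the call cost) are all hypothetical names, and the default limits are arbitrary.

```python
from dataclasses import dataclass
from typing import Callable, Tuple


@dataclass
class Budget:
    max_steps: int = 8             # loop limit
    max_retries_per_step: int = 2  # retry ceiling
    max_cost_usd: float = 0.50     # hard spend ceiling per request


class BudgetExceeded(Exception):
    """Raised when a request hits one of its execution limits."""


def run_agent(step: Callable[[int], Tuple[bool, bool, float]],
              budget: Budget) -> Tuple[int, float]:
    """Run steps until the task is done or a guardrail trips.

    `step(i)` is a hypothetical callable returning (succeeded, done, cost_usd).
    Returns (steps_taken, total_cost_usd).
    """
    spent = 0.0
    for i in range(budget.max_steps):
        for _attempt in range(budget.max_retries_per_step + 1):
            succeeded, done, cost = step(i)
            spent += cost  # failed attempts still cost money
            if spent > budget.max_cost_usd:
                raise BudgetExceeded(f"cost ceiling hit at step {i}")
            if succeeded:
                break
        else:
            # Inner loop finished without a successful attempt.
            raise BudgetExceeded(f"step {i} failed after retries")
        if done:
            return i + 1, spent
    raise BudgetExceeded("step limit reached")
```

What happens when a limit trips (return a partial result, ask the user to confirm, or escalate to a human) is a product decision; the sketch simply raises an exception so the caller has to choose.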

Packaging: Tier intelligence, not just features

Product- and pricing-led. Cost-validated by finance.

In AI, packaging is not just about feature differentiation; it’s also a cost control mechanism. The first decision is where AI sits:

- Embedded within core plans

- Monetized in premium tiers

- Sold as separate add-ons

The goal is to strike the right balance between accessibility and control: driving usage without eroding margins or losing competitiveness.

Beyond feature placement, intelligence itself should be tiered by:

- Model access by plan

- Context length limits

- Automation depth restrictions (e.g., generative vs. fully autonomous)

- Usage caps tied to workflow volume

When AI capabilities are bundled into flat plans without guardrails, margins erode over time.
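
As a rough illustration, the four tiering dimensions listed above can be encoded as plan entitlements that are checked before a request runs. The plan names, limits, and the `is_allowed` helper are hypothetical and exist only to show the shape of such a configuration.

```python
from dataclasses import dataclass
from typing import FrozenSet


@dataclass(frozen=True)
class PlanEntitlements:
    allowed_models: FrozenSet[str]  # model access by plan
    max_context_tokens: int         # context length limit
    autonomous_agents: bool         # automation depth restriction
    monthly_workflow_cap: int       # usage cap tied to workflow volume


# Hypothetical plans and limits, chosen only for illustration.
PLANS = {
    "starter": PlanEntitlements(frozenset({"light"}), 8_000, False, 500),
    "pro": PlanEntitlements(frozenset({"light", "mid"}), 32_000, False, 5_000),
    "enterprise": PlanEntitlements(
        frozenset({"light", "mid", "premium"}), 128_000, True, 50_000),
}


def is_allowed(plan: str, model: str, context_tokens: int,
               autonomous: bool, workflows_used: int) -> bool:
    """Return True if the request fits within the plan's entitlements."""
    p = PLANS[plan]
    return (model in p.allowed_models
            and context_tokens <= p.max_context_tokens
            and (p.autonomous_agents or not autonomous)
            and workflows_used < p.monthly_workflow_cap)
```

Keeping entitlements in one structure lets pricing change a tier without touching application code, and gives finance a single place to read the cost exposure each plan permits.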

Pricing: Align the price metric with cost to serve

Pricing-led. Finance-validated. Product-enabled.

Per-seat pricing breaks in AI. One user can generate 10 to 20 times more cost than another under the same license.

As a result, many AI companies are shifting toward:

- Activity-based pricing (e.g., per task, per workflow, per API call)

- Outcome-based pricing (e.g., per resolution or per result)

- Hybrid models (e.g., base fee plus usage or overages)

However, not all usage-based or value-based pricing is aligned with cost.

Pricing works well when:

- The price metric scales with underlying compute drivers (e.g., tokens, workflows, agent runs)

- Usage and cost have a consistent relationship

- Heavy usage is bounded through limits or overages

Pricing breaks when:

- The value metric is disconnected from cost (e.g., pricing per outcome when workflows vary in complexity)

- A small number of users drive disproportionate usage

- High-cost features are bundled without clear limits

For example, pricing per “task completed” works when tasks are similar. It breaks when some tasks require 10 times more reasoning, context, or retries.
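
The arithmetic behind that example can be sketched as follows. The price, the simple-task cost, and the share of complex tasks are assumed figures; only the 10x multiplier comes from the example above.

```python
def blended_margin(price: float, simple_cost: float,
                   complex_multiplier: float, complex_share: float) -> float:
    """Gross margin under a flat per-task price when a share of tasks
    costs `complex_multiplier` times more than a simple task."""
    complex_cost = simple_cost * complex_multiplier
    avg_cost = (1 - complex_share) * simple_cost + complex_share * complex_cost
    return (price - avg_cost) / price


# Assumed figures: $1.00 per completed task, $0.20 to serve a simple task.
for share in (0.0, 0.1, 0.3, 0.5):
    margin = blended_margin(price=1.00, simple_cost=0.20,
                            complex_multiplier=10, complex_share=share)
    print(f"{share:.0%} complex tasks -> margin {margin:.0%}")
```

Under these assumptions the margin starts at 80% when every task is simple and turns negative once half the tasks are complex, even though the price per task never changed.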

Commercial terms: Transfer volatility deliberately

Finance- and sales-owned. Structured with pricing.

AI cost curves are inherently volatile due to changing model prices, usage spikes, and increasing agent complexity.

Enterprise contracts must reflect this reality:

- Usage bands that define expected consumption ranges

- True-ups to reconcile actual usage against contracted levels

- Overage triggers that charge for usage beyond agreed limits

- Model-pricing clauses that account for changes in provider prices
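
A minimal sketch of how a usage band, an overage trigger, and a true-up might translate into billing logic is shown below. The function names, the committed volumes, and the rates are hypothetical; actual contract mechanics vary widely.

```python
from typing import Sequence


def monthly_invoice(usage_units: int, committed_units: int,
                    base_fee: float, overage_rate: float) -> float:
    """Base fee covers the committed band; usage above it is billed as overage."""
    overage_units = max(0, usage_units - committed_units)
    return base_fee + overage_units * overage_rate


def annual_true_up(monthly_usage: Sequence[int], annual_commit: int,
                   rate: float) -> float:
    """Year-end reconciliation: charge for usage above the annual commitment."""
    excess = max(0, sum(monthly_usage) - annual_commit)
    return excess * rate


# Assumed terms: 10,000 agent runs per month for $5,000, then $0.40 per extra run.
print(monthly_invoice(usage_units=12_000, committed_units=10_000,
                      base_fee=5_000.0, overage_rate=0.40))
```

In this sketch the customer absorbs volatility above the band and the company absorbs it below. Shifting the band width or the overage rate is how a contract moves that risk from one side to the other.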

The key question: who absorbs cost volatility, the company or the customer? Well-structured agreements share this risk. These five levers are interconnected, and when they operate in coordination, AI scales in a durable and predictable way.

The real shift in AI monetization

SaaS margins improve with scale. In AI, margins improve only when intentionally designed. Because cost is tied to usage and behavior, growth alone doesn’t guarantee margin expansion.

That’s why the key questions are changing:

- Are we scaling revenue, or are we scaling cost right along with it?

- If model prices increase by 30%, does our model still work?

- Do we understand margins at the feature and segment level?
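
The second question lends itself to a quick stress test. The sketch below assumes a simple cost split between model spend and all other COGS; the function name and the figures are illustrative, and only the 30% increase comes from the question above.

```python
def margin_after_price_change(revenue: float, model_cost: float,
                              other_cogs: float,
                              model_price_change: float) -> float:
    """Gross margin after model prices move by `model_price_change`
    (0.30 means +30%), holding usage and revenue constant."""
    new_model_cost = model_cost * (1 + model_price_change)
    return (revenue - new_model_cost - other_cogs) / revenue


# Assumed monthly figures for one feature or segment, in USD.
baseline = margin_after_price_change(100_000, 30_000, 15_000, 0.0)
stressed = margin_after_price_change(100_000, 30_000, 15_000, 0.30)

print(f"baseline margin: {baseline:.0%}")
print(f"margin after +30% model prices: {stressed:.0%}")
```

Running the same calculation per feature and per customer segment, rather than once for the whole business, is what answers the third question.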

Growth can hide these issues for a while, but the economics always catch up. In the next article, we’ll introduce the AI Profitability Risk Matrix, a practical way for founders, CFOs, and investors to assess whether an AI business is built to scale or quietly accumulating risk.